Temperature is one of the most measured quantities on Earth. It governs the safety of the food on your plate, the sterility of a hospital operating theatre, the quality of a pharmaceutical batch, and the proper curing of the concrete holding up a bridge. And yet, across all these industries, one inconvenient truth persists: a thermometer that hasn't been calibrated is little more than an expensive stick with numbers on it.

Calibration isn't glamorous. It doesn't make headlines. But it is the invisible backbone of measurement confidence — the reason a nurse trusts a reading of 38.6°C, the reason a pasteurisation plant doesn't send contaminated milk to supermarket shelves, and the reason your car engine doesn't destroy itself during a cold start. This editorial digs into what thermometer calibration actually is, how it works in practice, the methods and standards involved, and — crucially — why skipping it is a risk that most industries simply cannot afford to take.

What Calibration Actually Means (and What It Doesn't)

There's a widespread misconception worth clearing up immediately: calibration is not the same as adjustment, and it is definitely not the same as buying a new thermometer.

Calibration is, at its core, a comparison. A thermometer under test is compared against a reference standard of known accuracy at one or more temperature points. The results of that comparison — the differences between what the instrument reads and what the reference says — are documented in a calibration certificate. That's it. That's calibration.

What calibration tells you is the error profile of your instrument. Whether you then correct for that error — by adjusting the device, applying a correction factor in software, or simply factoring the offset into your process — is a separate decision, governed by your application's tolerances.

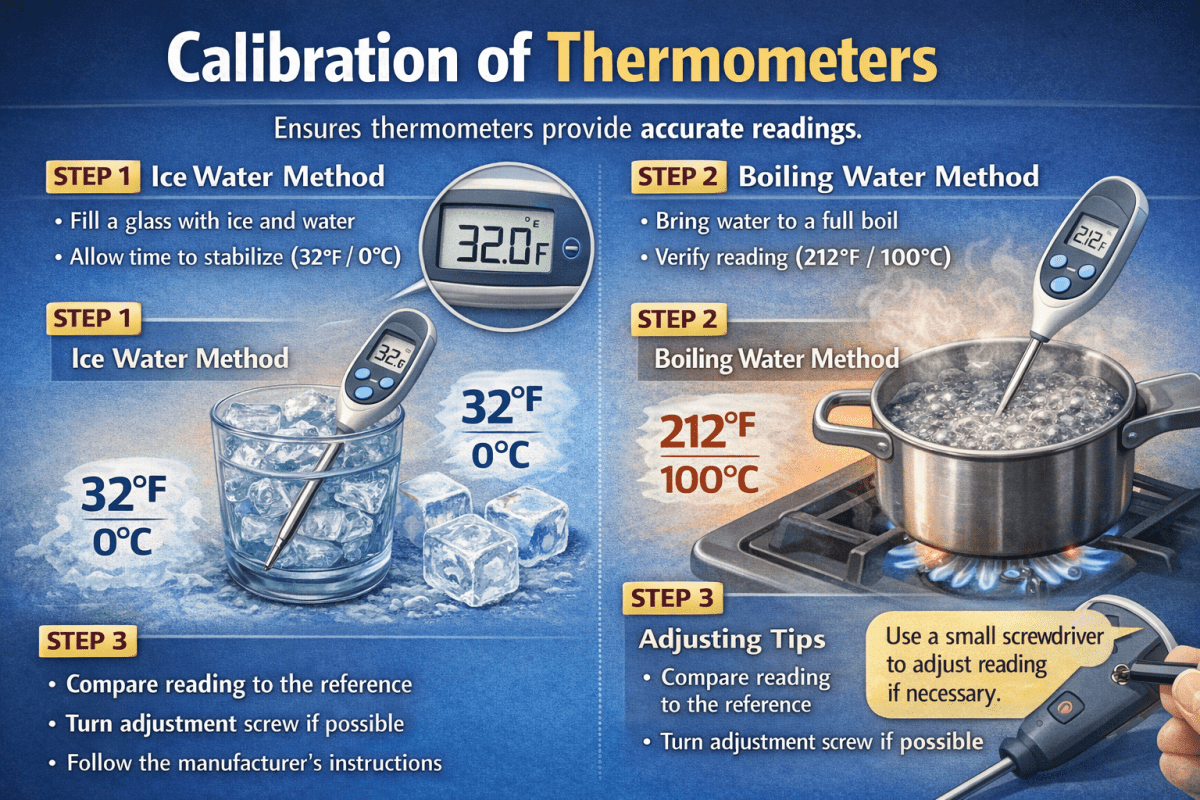

Adjustment, by contrast, is the physical act of aligning an instrument's output closer to the true value. Some thermometers have adjustment screws or software offsets. Others do not. Calibration without adjustment is still valid and useful; it simply means you're working with a documented, quantified offset rather than eliminating it.
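To make the idea of working with a documented offset concrete, here is a minimal Python sketch. The calibration points are invented for illustration, and the function names (`errors`, `corrected`) are hypothetical. Interpolating linearly between calibration points is one common choice, not the only one.

```python
from bisect import bisect_left

# Hypothetical calibration results: (reference °C, indicated °C) pairs.
CAL_POINTS = [(0.0, 0.2), (50.0, 50.3), (100.0, 100.4)]


def errors(points):
    """Return (indicated, error) pairs, where error = indicated - reference."""
    return [(ind, ind - ref) for ref, ind in points]


def corrected(reading, points=CAL_POINTS):
    """Subtract the linearly interpolated error from a raw reading."""
    table = sorted(errors(points))
    xs = [ind for ind, _ in table]
    if not xs[0] <= reading <= xs[-1]:
        # Extrapolating beyond the calibrated range is not supported here.
        raise ValueError("reading is outside the calibrated range")
    i = bisect_left(xs, reading)
    if xs[i] == reading:
        return reading - table[i][1]
    (x0, e0), (x1, e1) = table[i - 1], table[i]
    err = e0 + (e1 - e0) * (reading - x0) / (x1 - x0)
    return reading - err


print(round(corrected(25.25), 3))
```

The sketch refuses to extrapolate: a reading outside the calibrated range has no documented error, so no correction is offered for it.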

The Reference Hierarchy: Traceable to What, Exactly?

Every calibration is only as good as the reference it's compared against. This is where the concept of metrological traceability enters the picture, and it matters enormously.

The global temperature measurement system is ultimately anchored to the International Temperature Scale of 1990 (ITS-90), maintained by national metrology institutes around the world. In the United States, that is the National Institute of Standards and Technology (NIST), located at 100 Bureau Drive, Gaithersburg, MD 20899, USA. In the United Kingdom, the authority is the National Physical Laboratory (NPL), Hampton Road, Teddington, Middlesex, TW11 0LW, UK. In Germany, the Physikalisch-Technische Bundesanstalt (PTB) at Bundesallee 100, 38116 Braunschweig, Germany holds this role. In Australia, it is the National Measurement Institute (NMI) at 1/153 Bertie Street, Port Melbourne, VIC 3207.

These institutions maintain fixed-point cells — devices that realise pure physical phase transitions, such as the triple point of water (0.01°C exactly) or the freezing point of zinc (419.527°C) — with uncertainties measured in thousandths of a degree. From these primary standards, traceability flows downward through accredited calibration laboratories to the instruments used in your factory, hospital, or kitchen.

The chain looks like this: Primary standard → Accredited calibration laboratory → Working reference thermometer → Your instrument. Break any link in that chain, and the claim of traceability breaks with it. A thermometer "calibrated" against an unchecked reference, however precise-looking, is not traceable — and in a regulated industry, that means it may as well not have been calibrated at all.
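The "break any link" rule can be sketched as a simple walk up the chain. This is an illustration under stated assumptions, not a description of any real laboratory system: the `Link` record, the `next_due` date, and the equipment names are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Link:
    name: str
    calibrated_against: Optional[str]  # None marks the primary standard
    next_due: Optional[date]           # date the next calibration is due


def is_traceable(instrument, links, today):
    """Walk up the chain; every link must be documented and in date."""
    by_name = {link.name: link for link in links}
    seen = set()
    current = by_name.get(instrument)
    while current is not None and current.name not in seen:
        seen.add(current.name)
        if current.calibrated_against is None:
            return True  # reached the primary standard
        if current.next_due is None or current.next_due < today:
            return False  # missing or overdue calibration breaks the chain
        current = by_name.get(current.calibrated_against)
    return False  # unknown reference or circular chain


# Hypothetical records, for illustration only.
CHAIN = [
    Link("primary standard", None, None),
    Link("accredited lab reference", "primary standard", date(2026, 1, 31)),
    Link("working reference", "accredited lab reference", date(2025, 9, 30)),
    Link("kitchen probe", "working reference", date(2025, 12, 31)),
    Link("spare probe", "unchecked reference", date(2025, 12, 31)),
]

print(is_traceable("kitchen probe", CHAIN, date(2025, 6, 1)))
print(is_traceable("spare probe", CHAIN, date(2025, 6, 1)))
```

The spare probe fails even though its own record is in date, because the reference it was compared against does not appear anywhere in the documented chain.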

Methods of Calibration: Fixed Points, Comparison, and Everything In Between

There is no single universal method for calibrating thermometers. The right approach depends on the type of thermometer, the temperature range of interest, and the uncertainty required. The three principal approaches are fixed-point calibration, comparison calibration in a stirred liquid bath, and dry block calibration, which is itself a portable form of comparison.

Fixed-Point Calibration

This is the highest-accuracy method and the one used by national metrology institutes. It exploits a fundamental property of pure substances: during a phase transition (melting, freezing, boiling) at constant pressure, the temperature of a pure substance remains constant regardless of how much heat is added or removed. The triple point of water, for instance, is assigned a value of exactly 0.01°C on ITS-90, and a well-made cell can realise it to within about ±0.0001°C.

Fixed-point calibration requires specialised apparatus — sealed cells containing ultra-pure substances — and is typically performed only in primary laboratories or high-end accredited facilities. It is not the sort of thing done on a production line. However, it is the foundation on which everything else rests.

Comparison Calibration

This is the most common method used in industrial and laboratory settings. The thermometer under test is placed alongside a calibrated reference thermometer in a stable, uniform temperature environment — typically a stirred liquid bath or a dry block calibrator — and the two readings are compared at multiple temperature points across the working range.

The key requirements are temperature stability (how little the temperature drifts over time) and uniformity (how little it varies across the working volume). If the bath or block drifts during the comparison, or differs by even a fraction of a degree between the two probe positions, the two instruments are not measuring the same temperature and the results are unreliable. Quality stirred liquid baths, such as those produced by Fluke Calibration (6920 Seaway Blvd, Everett, WA 98203, USA) or AMETEK Jofra (Ellekaer 6, 2730 Herlev, Denmark), can achieve stability and uniformity of ±0.01°C over their working volume, making them suitable for calibrating everything from industrial thermocouples to precision platinum resistance thermometers (PRTs).
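A rough way to check a bath against a ±0.01°C figure is sketched below in Python. The definitions used (half the peak-to-peak spread, quoted as a ± value) are an assumption made for this example, since manufacturers and laboratories define stability and uniformity in different ways. The readings are invented.

```python
def stability(readings):
    """Half the peak-to-peak spread of one probe's readings over time (°C)."""
    return (max(readings) - min(readings)) / 2


def uniformity(position_means):
    """Half the largest difference between mean readings at different positions (°C)."""
    return (max(position_means) - min(position_means)) / 2


def bath_is_suitable(time_series, position_means, limit=0.01):
    """True if both stability and uniformity are within +/- limit."""
    return stability(time_series) <= limit and uniformity(position_means) <= limit


# Hypothetical readings at a nominal 50 °C set point.
over_time = [50.002, 49.998, 50.004, 49.996]   # one probe, successive readings
by_position = [50.001, 49.999, 50.003]         # mean reading at three positions

print(bath_is_suitable(over_time, by_position))
```

A bath can pass one test and fail the other, which is why the two quantities are kept separate here rather than merged into a single "accuracy" number.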

Dry Block Calibration

Dry block calibrators use a heated (or cooled) metal block with precisely machined wells to hold sensor probes. They are portable, fast, and suitable for field calibration. The trade-off is that dry block calibrators typically achieve less uniformity than liquid baths — ±0.1°C to ±0.5°C depending on the model — which limits the uncertainty achievable.

For many industrial applications, this is perfectly acceptable. A process thermocouple measuring furnace temperature to ±5°C doesn't need bath-level calibration. But using a dry block to calibrate a precision thermometer for pharmaceutical use would be a category error.

Types of Thermometers and Their Calibration Characteristics

Not all thermometers age, drift, or respond to calibration in the same way. Understanding the specific characteristics of each type is essential for choosing appropriate calibration intervals and methods.

Calibration Intervals: How Often Is Often Enough?

The table above suggests intervals, but calibration frequency is not one-size-fits-all. It should be determined by a combination of four factors: the criticality of the measurement, the known drift characteristics of the instrument type, the conditions of use, and any regulatory requirements applicable to your industry.

A Type K thermocouple used at high temperatures in a steel furnace may need checking every three months because high-temperature cycling promotes oxidation and other metallurgical changes in the thermoelement alloys, which can shift the thermoelectric output significantly. The same instrument sitting in a climate-controlled laboratory, used once a week at modest temperatures, might be fine for twelve months.

The ISO/IEC 17025 standard — the global benchmark for calibration and testing laboratories — requires that organisations establish and maintain calibration intervals as part of their documented management system. It does not prescribe specific intervals; it requires that intervals be determined and justified. The key phrase is measurement assurance: you need enough calibration evidence to maintain confidence in your measurements between calibrations.
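One way to justify an interval from calibration evidence is to estimate a drift rate from past as-found errors and project when the error would reach a chosen fraction of the tolerance. The Python sketch below is a simplified illustration, not a method prescribed by ISO/IEC 17025. It assumes roughly linear drift, at least two calibrations at different times, and a hypothetical `safety` fraction of 0.5.

```python
def drift_per_month(history):
    """Least-squares slope of as-found error (°C) against elapsed months."""
    n = len(history)
    mean_t = sum(t for t, _ in history) / n
    mean_e = sum(e for _, e in history) / n
    num = sum((t - mean_t) * (e - mean_e) for t, e in history)
    den = sum((t - mean_t) ** 2 for t, _ in history)
    return num / den


def months_until_limit(history, tolerance, safety=0.5):
    """Months after the latest calibration until |error| reaches safety * tolerance."""
    rate = drift_per_month(history)
    _, last_error = max(history)  # entry with the latest time
    limit = safety * tolerance
    if abs(last_error) >= limit:
        return 0.0  # already at or beyond the action limit
    if rate == 0:
        return float("inf")  # no measurable drift in the history
    target = limit if rate > 0 else -limit
    return (target - last_error) / rate


# Hypothetical history: (months since first calibration, as-found error in °C).
HISTORY = [(0, 0.0), (6, 0.3), (12, 0.6)]

print(round(months_until_limit(HISTORY, tolerance=2.0), 1))
```

The projected figure is an upper bound on the next interval rather than a recommendation. Criticality, conditions of use, and regulatory requirements may all call for something shorter.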

Several national bodies offer more prescriptive guidance. In the UK, the United Kingdom Accreditation Service (UKAS), at 2 Pine Trees, Chertsey Lane, Staines-upon-Thames, TW18 3HR, publishes application notes for specific industries. In the USA, the American Society for Quality (ASQ) and the National Conference of Standards Laboratories International (NCSLI) at 2995 Wilderness Place, Suite 107, Boulder, CO 80301, USA publish recommended practices for calibration programme management.

The Ice Point Check: Calibration's Cheapest Ally

Between formal calibrations, the ice point check is the most practical tool in a quality-conscious technician's arsenal. The ice bath — made from crushed ice and just enough water to allow a slush consistency — realises 0°C with an uncertainty of around ±0.02°C when properly prepared. It costs nothing beyond the ice.

The procedure is simple: fill a vacuum flask or insulated container with finely crushed ice, add just enough clean water to fill the voids, allow equilibration for two minutes, then insert the thermometer probe to the appropriate immersion depth and allow the reading to stabilise. A correctly functioning probe thermometer should read 0.0°C ±0.1°C. Anything outside that range is a flag for investigation.
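The pass/fail decision at the end of that procedure is easy to record consistently. The Python sketch below assumes the ±0.1°C acceptance limit quoted above. The function name `ice_point_check` is hypothetical.

```python
def ice_point_check(reading_c, tolerance_c=0.1):
    """Return (passed, offset) for a stabilised reading taken in an ice bath.

    offset is the reading minus the nominal ice point of 0.0 °C.
    """
    offset = reading_c - 0.0
    return abs(offset) <= tolerance_c, offset


passed, offset = ice_point_check(0.05)
print(passed, offset)
```

Logging the offset as well as the pass/fail result means a slow drift shows up in the records before the probe actually fails a check.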

Ice point checks don't replace calibration — they can't characterise the full temperature range of an instrument or produce a traceable certificate — but they provide an inexpensive, frequent sanity check that can catch gross failures between calibration events. In food service environments regulated by HACCP (Hazard Analysis and Critical Control Points) requirements, daily ice point checks for probe thermometers are widely recommended and in many jurisdictions required.

Regulatory and Industry Frameworks: Where Calibration Becomes Mandatory

Several industries don't treat calibration as optional. They treat it as a regulatory obligation, with traceability requirements embedded in law, standard, or contract.

In pharmaceuticals, the US Food and Drug Administration (FDA) 21 CFR Part 211 requires that instruments used in production and testing be calibrated at suitable intervals against standards traceable to national or international standards. The European Medicines Agency (EMA) guidelines and the EU GMP (Good Manufacturing Practice) framework carry equivalent requirements. Facilities subject to these rules — including every major pharmaceutical manufacturer — maintain detailed calibration schedules, equipment logs, and calibration certificates as part of their quality management systems.

In food safety, the Codex Alimentarius framework and national implementations of HACCP require that temperature-measuring devices be verified and, where necessary, calibrated at defined intervals. In the UK, the Food Standards Agency (FSA) at Floors 6 and 7, Clive House, 70 Petty France, London, SW1H 9EX, sets guidance on probe thermometer verification for food businesses.

In medical devices and clinical settings, ISO 15189 (for medical laboratories) and country-specific clinical governance frameworks mandate calibration of temperature measurement devices used in diagnostic and patient care contexts.

In aerospace and defence, AS9100 (the quality management standard for the aviation, space, and defence industries) builds on ISO 9001 and adds its own requirements for monitoring and measuring equipment, including a register of that equipment and a process for recalling it for calibration or verification.

Common Calibration Errors That Quietly Skew Results

Calibration is not error-proof. Done carelessly, it produces a certificate that provides false confidence. The most common mistakes made during thermometer calibration are worth examining in detail.

Insufficient immersion depth is perhaps the most pervasive. A thermometer probe has a sensing element — a platinum resistor, a thermocouple junction — located at a specific point, usually near the tip. If the probe is not immersed deeply enough in the reference medium, heat conduction along the stem will cause the indicated temperature to deviate from the true value at the sensing element. For most industrial probes, an immersion depth of at least 15 times the probe diameter is a reasonable minimum. For precision work, the immersion depth specified by the manufacturer must be strictly observed.
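The 15-diameter rule of thumb is simple arithmetic. The Python sketch below is illustrative only, and the parameter names are hypothetical. Where a manufacturer's figure is available, the sketch returns that figure instead of the rule of thumb.

```python
def min_immersion_mm(probe_diameter_mm, factor=15, manufacturer_min_mm=None):
    """Minimum immersion depth in millimetres.

    Uses the manufacturer's specified depth when one is given; otherwise
    falls back to the rule of thumb of `factor` times the probe diameter.
    """
    if manufacturer_min_mm is not None:
        return manufacturer_min_mm
    return factor * probe_diameter_mm


print(min_immersion_mm(6.0))                             # 6 mm probe, rule of thumb
print(min_immersion_mm(6.0, manufacturer_min_mm=120.0))  # manufacturer's figure wins
```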

Bath or block non-uniformity is another source of hidden error. Even quality calibration baths have temperature gradients — small, but real. If the reference thermometer occupies one location in the bath and the test thermometer another, and there is a gradient between them, the comparison is corrupted. Best practice is to position the reference and test probes at the same depth, as close together as practical, in the most uniform zone of the bath.

Inadequate equilibration time is a third common failure. Stainless steel probe assemblies have thermal mass. When inserted into a bath at a different temperature, they take time to reach true thermal equilibrium. Rushing a reading — particularly with large-bore probes or probes inside protective sheaths — produces artificially biased results. Waiting for reading stability (typically defined as less than 0.01°C variation over 60 seconds) before recording is standard practice in rigorous calibration.
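The stability criterion quoted above (less than 0.01°C variation over 60 seconds) can be checked automatically before a reading is accepted. The Python sketch below is a minimal illustration. The sample format and the simulated settling curve are assumptions made for this example.

```python
def is_stable(samples, window_s=60.0, max_variation_c=0.01):
    """samples: list of (time_s, temp_c) tuples in time order.

    True if at least window_s seconds of data exist and the readings in
    the final window_s seconds vary by less than max_variation_c.
    """
    if not samples:
        return False
    t_end = samples[-1][0]
    if t_end - samples[0][0] < window_s:
        return False  # not enough history to judge stability
    window = [temp for t, temp in samples if t >= t_end - window_s]
    return max(window) - min(window) < max_variation_c


# Simulated probe settling toward 25.0 °C, sampled every 10 seconds.
settling = [(t, 25.0 + 0.5 * 0.9 ** t) for t in range(0, 121, 10)]

print(is_stable(settling[:7]))  # first 60 s: still settling
print(is_stable(settling))      # after 120 s: last 60 s are steady
```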

Undetected reference thermometer drift is perhaps the most insidious problem of all. A calibration is only as good as the reference it is compared against. If the reference thermometer has drifted since its last calibration but is still within its nominal range, every calibration performed against it is in error by that drift amount, and the technician has no way of knowing. This is why reference thermometers in calibration laboratories are subject to their own rigorous calibration schedules, and why the uncertainty of the reference contributes to the uncertainty of every calibration performed with it.
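How the reference's uncertainty feeds into the final result can be shown with a simple uncertainty budget. The Python sketch below combines standard uncertainties by root-sum-square and applies a coverage factor. It assumes the contributions are uncorrelated and enter with unit sensitivity, and the budget values are invented for illustration.

```python
import math


def expanded_uncertainty(standard_uncertainties, k=2.0):
    """Root-sum-square of standard uncertainties (°C), multiplied by k."""
    combined = math.sqrt(sum(u ** 2 for u in standard_uncertainties))
    return k * combined


# Hypothetical budget, all as standard uncertainties in °C.
BUDGET = {
    "reference thermometer": 0.03,
    "bath stability and uniformity": 0.04,
}

print(round(expanded_uncertainty(BUDGET.values()), 3))
```

Because the terms add in quadrature, the largest contribution dominates. A drifting or poorly characterised reference therefore sets a floor that no amount of care elsewhere in the procedure can lower.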

Reading a Calibration Certificate: What the Numbers Mean

A calibration certificate is not just a piece of paper with a stamp on it. It contains actionable information that should be understood by whoever uses the instrument.

The key elements to look for are: the measurement points (the temperatures at which the instrument was checked), the reference values at those points, the indicated values from the instrument under test, the calculated errors (indicated minus reference), and — critically — the expanded measurement uncertainty, expressed as a value in degrees and a coverage factor (typically k=2, representing approximately 95% confidence).

If a certificate states that at a reference temperature of 100°C your thermometer reads 100.4°C with an expanded uncertainty of ±0.2°C (k=2), this means your instrument over-reads by 0.4°C at that point, and the true error lies between +0.2°C and +0.6°C with approximately 95% confidence. Put another way, when the display shows 100.4°C, the actual temperature is between 99.8°C and 100.2°C. Whether that matters depends on your application's tolerance.
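Comparing a certificate result against a tolerance can be sketched as a simple decision rule. The Python example below uses one common guard-banded approach, in which the expanded uncertainty must fit inside the tolerance for a clear pass. This is an assumption for illustration, and your quality system may specify a different rule.

```python
def conformity(indicated, reference, expanded_u, tolerance):
    """Classify a calibration point against a symmetric tolerance (all in °C).

    "pass": the error plus its uncertainty is within tolerance.
    "fail": the error minus its uncertainty is outside tolerance.
    "indeterminate": the uncertainty interval straddles the limit.
    """
    error = indicated - reference
    if abs(error) + expanded_u <= tolerance:
        return "pass"
    if abs(error) - expanded_u > tolerance:
        return "fail"
    return "indeterminate"


print(conformity(100.4, 100.0, 0.2, tolerance=1.0))
print(conformity(100.4, 100.0, 0.2, tolerance=0.5))
```

An "indeterminate" result is worth reporting rather than hiding. It says the calibration was not good enough to decide either way, which is an argument for a lower-uncertainty calibration method.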

Laboratories that issue calibration certificates should be accredited to ISO/IEC 17025 by a recognised accreditation body — UKAS in the UK, DAkkS (Deutsche Akkreditierungsstelle GmbH, Spittelmarkt 10, 10117 Berlin, Germany) in Germany, A2LA (American Association for Laboratory Accreditation, 5202 Presidents Court, Suite 220, Frederick, MD 21703, USA) in the United States. Accreditation means an independent body has verified that the laboratory has the technical competence, equipment, and systems to produce reliable calibrations.

The Future of Thermometer Calibration: On-Board Diagnostics and the Uncertainty Budget

The calibration landscape is shifting. Modern process instrumentation increasingly incorporates built-in diagnostics — the ability to detect degradation, contamination, or drift through self-assessment algorithms rather than waiting for the next scheduled calibration. HART-communicating smart transmitters, for instance, can report loop integrity indicators that flag potential sensor issues between calibrations.

More fundamentally, the 2019 redefinition of the SI, which fixed the value of the Boltzmann constant to define the kelvin, has opened the door to primary realisations of temperature that do not require physical fixed-point cells. Acoustic gas thermometry and Johnson noise thermometry, techniques that determine thermodynamic temperature from well-understood physical laws rather than by comparison with another thermometer, are moving from laboratory curiosities toward practical instruments. When these mature sufficiently, the traceability chain could compress dramatically, potentially allowing calibration of working instruments directly against primary standards without intermediate transfer standards.

For now, though, the basics remain the basics: a calibrated reference, a stable temperature environment, documented comparison results, a traceable certificate, and a programme that ensures calibration happens at intervals appropriate to the criticality of the application. None of this is glamorous. But it is, as anyone who has had a product recall, a failed audit, or a patient safety incident traced back to a faulty temperature reading will attest, absolutely essential.

Conclusion: Calibration Is Not a Cost — It's a Control

The argument for calibration is sometimes framed in terms of compliance — you do it because a standard or a regulator requires it. That framing is incomplete. The deeper reason to calibrate thermometers is that measurement quality is process quality. A temperature reading you can't trust is not a data point; it's noise masquerading as information.

Industries that treat calibration as a genuine quality tool, rather than a box-ticking exercise, don't just avoid failures. They accumulate evidence about instrument behaviour over time, learn where their processes are most sensitive, and make better decisions about when to replace ageing sensors rather than calibrating them into obsolescence.

Temperature measurement underpins too much of modern industrial and clinical life for its accuracy to be taken on faith. The numbers on the display earn that trust through a chain of comparisons stretching back to primary standards maintained by national laboratories. Calibration is how that chain stays intact, one documented comparison at a time.

For further reading on traceability frameworks and calibration best practices, consult the documentation published by NIST (nist.gov), NPL (npl.co.uk), BIPM (bipm.org), and your relevant national accreditation body.