Temperature is one of the most intimate measurements in human life. It tells you whether your child has a fever, whether your roast is done, whether the chemical reaction in the lab is proceeding safely, or whether the outside air is worth braving without a coat. Yet the instrument we use to make that measurement — the humble thermometer — is something most of us have never really thought about. We shake it, we read it, we move on.

That's a shame. Because thermometers are one of the most elegant engineering stories in science, spanning four centuries of ingenuity, from Galileo's crude water-filled glass tube to the digital infrared sensors now built into smartphones.

Let's fix that.

The Core Idea: Everything Expands When Hot

Before we get into designs and types, let's establish the physical truth that the classic thermometers are built on: most matter expands when it gets warmer and contracts when it gets cooler. This is called thermal expansion, and it happens in solids, liquids, and gases, though at very different rates.

The trick of building a thermometer is finding a material whose expansion is predictable, consistent, and readable. Early instrument-makers discovered that certain liquids — mercury, alcohol — expand uniformly and reliably enough that you can calibrate a scale beside them and use the height of the liquid column to read off a temperature.

That single idea, "heat makes things bigger," is still at the heart of every liquid-in-glass and dial thermometer made today.

The Mercury-in-Glass Thermometer — The Classic Model

The mercury thermometer, perfected by Daniel Gabriel Fahrenheit in the early 1700s and manufactured for three centuries, is the design most people picture when they hear the word "thermometer."

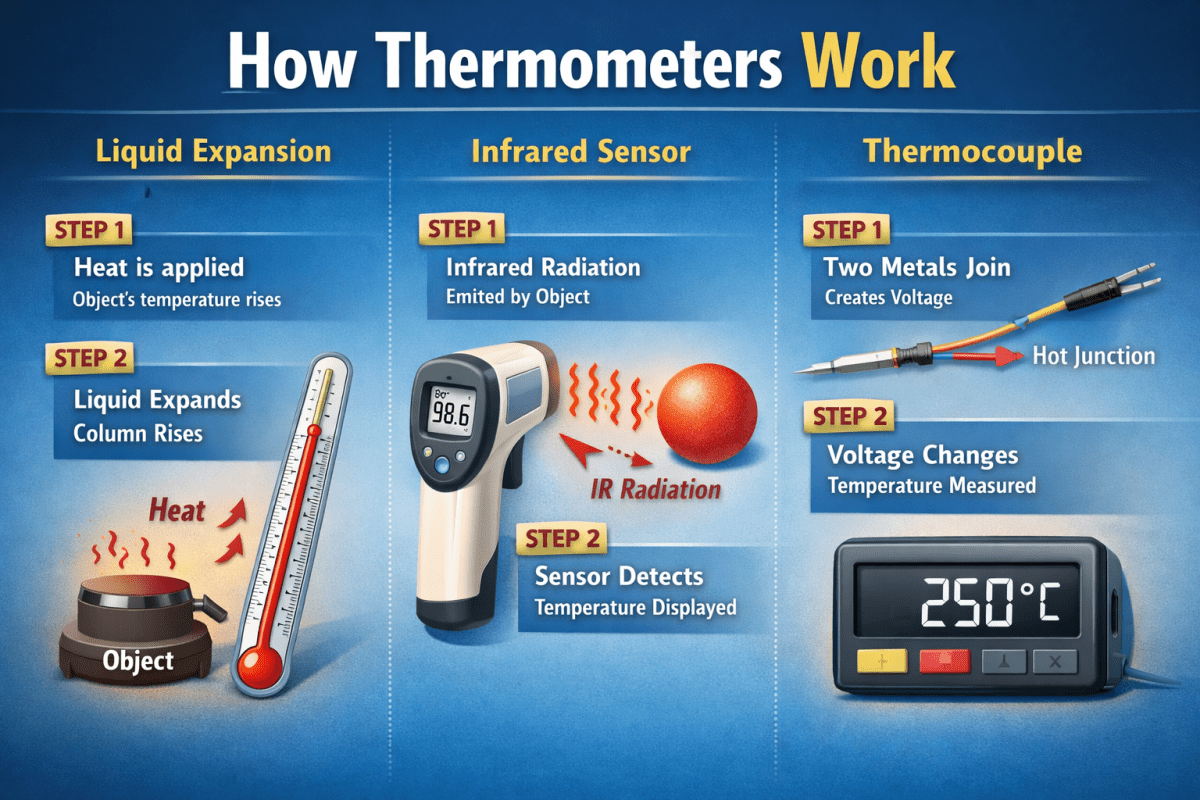

At its core, it's disarmingly simple: a thin glass capillary tube sealed at the top, with a small glass bulb at the bottom filled with mercury. The bulb and tube together contain a fixed, known amount of liquid metal. When temperature rises, mercury expands more than the surrounding glass, so the excess has nowhere to go but up the narrow tube. Because the tube is so narrow, even a tiny volumetric expansion of the mercury produces a large, readable movement of the liquid column.
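To see how much the narrow tube amplifies the expansion, here is a rough sketch in Python. The expansion coefficients are approximate textbook values, and the bulb volume and bore diameter are made-up illustrative numbers rather than the dimensions of any real thermometer.

```python
import math

# Approximate, illustrative values (assumptions, not measurements).
BETA_MERCURY = 1.8e-4  # volumetric expansion of mercury, per °C
BETA_GLASS = 1.0e-5    # volumetric expansion of borosilicate glass, per °C


def column_rise_mm(bulb_volume_mm3, bore_diameter_mm, delta_t_c):
    """Estimate how far the mercury column moves for a temperature change."""
    # Only the expansion of the mercury in excess of the glass is pushed up the tube.
    excess_volume = bulb_volume_mm3 * (BETA_MERCURY - BETA_GLASS) * delta_t_c
    bore_area = math.pi * (bore_diameter_mm / 2) ** 2
    return excess_volume / bore_area


# Hypothetical thermometer: 200 mm^3 bulb, 0.2 mm bore, warmed by 1 °C.
rise = column_rise_mm(bulb_volume_mm3=200.0, bore_diameter_mm=0.2, delta_t_c=1.0)
print(f"Column rise: {rise:.2f} mm per °C")
```

With these assumed numbers, a change in volume of a few hundredths of a cubic millimetre moves the column by about a millimetre, and halving the bore diameter would quadruple that movement.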

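The scale marked on the glass was determined by calibration. Fahrenheit placed his thermometer in an ice-cold brine for the 0 mark, used a mixture of ice and water for 32°F, and took human body temperature as 96°F (the scale was later pinned to the freezing and boiling points of water at 32°F and 212°F, which puts normal body temperature near 98.6°F). Celsius, working about two decades later, chose the freezing and boiling points of pure water as his fixed points, 100 degrees apart. His original scale actually ran backwards, with 0° at boiling and 100° at freezing; it was flipped shortly afterwards into the form used today: 0° for freezing, 100° for boiling.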
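Because both modern scales are linear and anchored to the same two states of water (32°F and 212°F, 0°C and 100°C), converting between them is simple arithmetic. A minimal sketch, with function names chosen only for illustration:

```python
def fahrenheit_to_celsius(temp_f):
    """Convert °F to °C: 180 Fahrenheit degrees span 100 Celsius degrees."""
    return (temp_f - 32.0) * 100.0 / 180.0


def celsius_to_fahrenheit(temp_c):
    """Convert °C to °F using the same two fixed points."""
    return temp_c * 180.0 / 100.0 + 32.0


for temp_f in (32.0, 96.0, 98.6, 212.0):
    print(f"{temp_f:6.1f} °F = {fahrenheit_to_celsius(temp_f):6.2f} °C")
```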

Why mercury specifically? It has a wide liquid range (−39°C to 357°C), a metallic sheen that makes it easy to read, a low tendency to stick to glass, and an unusually uniform rate of expansion. It's also dense, which matters for accuracy in a narrow tube. The downside, of course, is that mercury is toxic, which is why these thermometers have been phased out of hospitals and households in much of the world. Most were replaced by alcohol-based alternatives (which are dyed red or blue for visibility) or by the digital thermometers now in every medicine cabinet.

How Bimetallic Strip Thermometers Work

A different physical principle governs the bimetallic strip thermometer — and this one is almost satisfying enough to be magic.

Two metals, typically brass and steel, are bonded together into a single strip. These metals expand at different rates when heated. Brass expands more readily than steel. So when the strip heats up, the brass side tries to grow longer than the steel side — but it can't, because they're fused. The only way the strip can accommodate the mismatch is to bend. And it bends in a predictable direction: toward the metal that expands less.
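The size of the mismatch is easy to estimate. The sketch below uses approximate textbook expansion coefficients for brass and steel (real alloys vary) and an arbitrary strip length, and it only computes how far apart the two layers would drift if they were free to slide; turning that into an actual bend angle needs a fuller mechanical model.

```python
# Approximate linear expansion coefficients, per °C (assumed typical values).
ALPHA_BRASS = 19e-6
ALPHA_STEEL = 12e-6


def free_length_mm(length_mm, alpha, delta_t_c):
    """Length an unbonded strip would reach after warming by delta_t_c."""
    return length_mm * (1 + alpha * delta_t_c)


def length_mismatch_um(length_mm, delta_t_c):
    """How much longer the brass 'wants' to be than the steel, in micrometres."""
    brass = free_length_mm(length_mm, ALPHA_BRASS, delta_t_c)
    steel = free_length_mm(length_mm, ALPHA_STEEL, delta_t_c)
    return (brass - steel) * 1000.0


# Hypothetical 100 mm strip warmed by 50 °C.
print(f"Mismatch: {length_mismatch_um(100.0, 50.0):.1f} µm")
```

A few tens of micrometres sounds negligible, but because the layers are bonded along their whole length, that small difference is enough to curl the strip visibly.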

That bending motion can be mechanically linked to a needle on a dial. Heat the strip and the needle swings one way; cool it and the needle swings back. The result is the traditional dial thermometer you've seen on ovens, on barbecue grills, and in the thermostats on walls. They're rugged, require no batteries, and are accurate enough for most practical purposes.

The same principle is used in thermostats to control central heating. When the room heats up, the strip bends far enough to physically break an electrical circuit, shutting off the furnace. When the room cools, the strip straightens, closes the circuit, and the heat comes back on. Simple, durable, brilliant.

Resistance Thermometers and Thermistors

Here we move into the territory of modern electronics. Metals change their electrical resistance as temperature changes. For most pure metals (platinum is the best example), resistance increases predictably and almost linearly with temperature. This makes platinum resistance thermometers (also called RTDs, for Resistance Temperature Detectors) extraordinarily accurate. They're the gold standard for industrial and scientific temperature measurement, capable of accuracy to within hundredths of a degree.

A Resistance Temperature Detector works by passing a small electrical current through a platinum element and measuring the voltage. From the voltage and the current, you calculate resistance. From the resistance, using well-established equations, you calculate temperature. The whole process happens in milliseconds.
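As a sketch of that chain of calculations, the code below assumes a standard Pt100 element (100 Ω at 0°C) and the Callendar-Van Dusen equation with the usual IEC 60751 coefficients, restricted to temperatures at or above 0°C. The function names and the 1 mA excitation current are illustrative choices, not part of any particular instrument.

```python
import math

# Pt100 constants (standard IEC 60751 values; valid here for 0 °C and above).
R0 = 100.0       # ohms at 0 °C
A = 3.9083e-3    # per °C
B = -5.775e-7    # per °C squared


def pt100_resistance(temp_c):
    """Resistance of a Pt100 at temp_c: R = R0 * (1 + A*T + B*T^2)."""
    return R0 * (1 + A * temp_c + B * temp_c ** 2)


def pt100_temperature(voltage_v, current_a):
    """Voltage and current -> resistance (Ohm's law) -> temperature."""
    resistance = voltage_v / current_a
    # Solve B*T^2 + A*T + (1 - R/R0) = 0 and keep the physically meaningful root.
    discriminant = A * A - 4 * B * (1 - resistance / R0)
    return (-A + math.sqrt(discriminant)) / (2 * B)


# Hypothetical reading: 1 mA through the element, 138.5055 mV across it.
print(f"{pt100_temperature(0.1385055, 0.001):.2f} °C")
```

The small quadratic term is the reason the response is described as nearly, rather than perfectly, linear.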

Thermistors work on a similar principle but use semiconductor materials — typically metal oxides — instead of metals. Semiconductors respond to temperature much more dramatically than metals do, so thermistors are extremely sensitive. The tradeoff is that their response is non-linear, which requires more complex calibration curves. Thermistors are the sensors in most digital clinical thermometers, in the temperature sensors inside computers, and in countless industrial and consumer devices.
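One common way to handle that non-linearity is the so-called Beta equation, a simplified form of the Steinhart-Hart model. The sketch below assumes an NTC thermistor (resistance falls as temperature rises) with a nominal 10 kΩ at 25°C and a Beta value of 3950 K; those are typical catalogue figures used here purely as placeholders, and a real part's datasheet values should replace them.

```python
import math


def thermistor_temp_c(resistance_ohm, r0_ohm=10_000.0, t0_c=25.0, beta=3950.0):
    """Estimate temperature from NTC resistance using the Beta equation.

    1/T = 1/T0 + (1/beta) * ln(R/R0), with temperatures in kelvin.
    """
    t0_k = t0_c + 273.15
    inverse_t = 1.0 / t0_k + math.log(resistance_ohm / r0_ohm) / beta
    return 1.0 / inverse_t - 273.15


for r in (30_000.0, 10_000.0, 5_000.0):
    print(f"{r:8.0f} Ω -> {thermistor_temp_c(r):6.2f} °C")
```

The logarithm is what makes the curve so steep: halving the resistance shifts the reading by well over ten degrees, which is where the sensitivity comes from.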

Thermocouples — When Two Metals Meet

Thermocouples are the workhorses of high-temperature measurement and one of the more counterintuitive devices in instrumentation.

When you join two different metals at a point and heat that junction, a small voltage develops between them. This is called the Seebeck effect, discovered in 1821. The voltage depends on the difference in temperature between the heated junction and the cooler far ends of the wires (the reference, or "cold," junction). If you measure that voltage (typically a few millivolts) and know the reference temperature, you can calculate the temperature at the junction.
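In practice the measured voltage reflects the temperature difference between the hot junction and the instrument end of the wires, so a reading has to be combined with a separately measured reference temperature. The sketch below uses a single, roughly constant sensitivity of about 41 µV per °C, a common rule of thumb for type K thermocouples over moderate ranges. Real instruments use standard polynomial tables instead, so treat this as a first-order approximation only.

```python
# Rule-of-thumb sensitivity for a type K thermocouple (an approximation).
SEEBECK_TYPE_K_UV_PER_C = 41.0


def junction_temp_c(measured_uv, reference_temp_c):
    """Estimate hot-junction temperature from voltage and reference temperature."""
    # The voltage encodes only the difference between the two ends.
    temperature_difference = measured_uv / SEEBECK_TYPE_K_UV_PER_C
    return reference_temp_c + temperature_difference


# Hypothetical reading: 4.1 mV with the meter terminals at 25 °C.
print(f"{junction_temp_c(4100.0, 25.0):.1f} °C")
```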

Thermocouples are prized because they can measure temperatures that would destroy any liquid-expansion or resistance thermometer. Industrial thermocouples are used inside furnaces, jet engines, and nuclear reactors, environments that can run well above 1,000°C. They're also fast, rugged, and cheap to manufacture.

The downside is relatively low accuracy compared to RTDs. For most factory and industrial applications, that's perfectly acceptable. For scientific calibration work, you'd reach for something more precise.

Infrared Thermometers — Reading Temperature Without Touching It

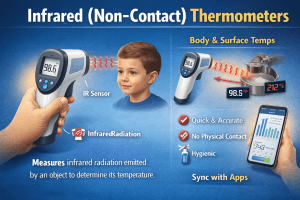

Every object above absolute zero emits infrared radiation. And critically, the intensity and wavelength of that radiation depend on the object's temperature. This is the physical basis for infrared thermometry, also called non-contact or radiation thermometry.
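Two standard results from radiation physics put numbers on that dependence: Wien's displacement law gives the wavelength at which emission peaks, and the Stefan-Boltzmann law gives the total power radiated per unit area. The sketch below treats the surface as an ideal emitter unless an emissivity is supplied; the function names are illustrative.

```python
WIEN_CONSTANT = 2.897771955e-3     # metre-kelvin
STEFAN_BOLTZMANN = 5.670374419e-8  # W per m^2 per K^4


def peak_wavelength_um(temp_k):
    """Wavelength of peak emission in micrometres (Wien's displacement law)."""
    return WIEN_CONSTANT / temp_k * 1e6


def radiated_power_w_per_m2(temp_k, emissivity=1.0):
    """Total radiated power per square metre (Stefan-Boltzmann law)."""
    return emissivity * STEFAN_BOLTZMANN * temp_k ** 4


skin_k = 37.0 + 273.15
print(f"Peak wavelength near body temperature: {peak_wavelength_um(skin_k):.1f} µm")
print(f"Radiated power: {radiated_power_w_per_m2(skin_k):.0f} W/m^2")
```

Because power scales with the fourth power of absolute temperature, doubling the temperature in kelvin multiplies the radiated power by sixteen.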

An infrared thermometer works by collecting infrared radiation from a surface using a lens and focusing it onto a detector called a thermopile — essentially a large array of thermocouples wired in series. The thermopile generates a voltage proportional to the infrared energy it receives. Electronics convert that voltage into a temperature reading displayed digitally, all in under a second.

The familiar forehead-scanning thermometers that became ubiquitous during the COVID-19 pandemic work on exactly this principle. They aim at the temporal artery on the forehead, collect infrared radiation emitted by the skin, and calculate temperature from the signal — all without touching the patient.

Infrared thermometers are also standard tools in professional kitchens, where chefs check pan temperatures before searing; in building inspections, where they detect heat leaking through walls or poorly insulated windows; and in HVAC maintenance, where technicians diagnose overheating electrical components.

Digital Thermometers and Smart Sensors

Most thermometers sold today are digital — which means they use one of the sensor technologies above (almost always a thermistor or an RTD) but add a microprocessor that converts the raw electrical signal into a displayed number.

The advantage over analog instruments is repeatability and precision. There's no human error in reading a dial or estimating where a liquid column sits between two scale marks. The sensor produces a voltage, an analog-to-digital converter turns that voltage into a number, and the microprocessor applies calibration math to output a temperature reading.
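The first two steps of that chain might look like the sketch below. It assumes a 12-bit converter with a 3.3 V reference and a thermistor on the low side of a voltage divider with a 10 kΩ fixed resistor; all of those are hypothetical circuit choices made for illustration. The resulting resistance would then be fed into whatever calibration equation suits the sensor.

```python
def adc_to_voltage(count, v_ref=3.3, bits=12):
    """Convert a raw ADC count into volts."""
    full_scale = 2 ** bits - 1
    return count / full_scale * v_ref


def divider_resistance(v_out, v_ref=3.3, r_fixed=10_000.0):
    """Sensor resistance from the divider output voltage.

    Assumes v_out = v_ref * R / (r_fixed + R), with the sensor on the low side.
    Not defined when v_out equals v_ref (open circuit).
    """
    return r_fixed * v_out / (v_ref - v_out)


raw_count = 1500  # hypothetical ADC reading
voltage = adc_to_voltage(raw_count)
print(f"{voltage:.3f} V -> {divider_resistance(voltage):.0f} Ω")
```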

Modern smart sensors go further. They connect via Bluetooth or Wi-Fi, log data over time, send alerts when temperature exceeds a threshold, and integrate with home automation systems. The meat probe stuck in your Sunday roast that sends a notification to your phone when the internal temperature hits 74°C is doing the same job as a mercury thermometer from 1720, turning a temperature-dependent physical change into a number you can read, just with considerably more electronics on top.

Gas Thermometers and the Absolute Scale

One more design deserves mention, less because it's common and more because it's historically important and physically fundamental.

Gas thermometers measure temperature by measuring the pressure of a fixed volume of gas — or conversely, the volume of a gas at fixed pressure. The ideal gas law tells us that pressure, volume, and temperature are linked: at constant volume, pressure rises linearly with temperature. If you extrapolate the pressure of a gas downward toward zero, the temperature at which pressure would reach zero is −273.15°C. This is absolute zero, the coldest possible temperature, and it's the origin point of the Kelvin scale.
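The extrapolation itself is just a straight line through two pressure readings. The sketch below uses idealised pressures generated from the ideal gas law rather than real laboratory data, so it recovers the textbook value essentially exactly; a real gas would deviate slightly.

```python
def extrapolate_zero_pressure_c(t1_c, p1, t2_c, p2):
    """Temperature (°C) at which a straight line through two points hits zero pressure."""
    slope = (p2 - p1) / (t2_c - t1_c)  # pressure change per °C
    return t1_c - p1 / slope


# Idealised constant-volume readings in kPa at the ice point and the steam point.
p_ice = 101.325
p_steam = 101.325 * 373.15 / 273.15

print(f"{extrapolate_zero_pressure_c(0.0, p_ice, 100.0, p_steam):.2f} °C")
```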

Gas thermometers were used in the 19th century to establish the absolute temperature scale and to calibrate the other instruments. Today they're still used in national metrology institutes — places like the National Institute of Standards and Technology (NIST) at 100 Bureau Drive, Gaithersburg, MD 20899, USA, or the National Physical Laboratory at Hampton Road, Teddington, Middlesex TW11 0LW, UK — where the international standards for temperature measurement are defined and maintained.

Calibration — The Science of Getting It Right

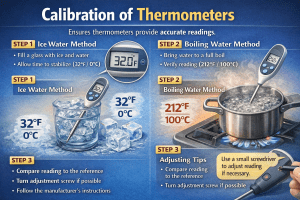

A thermometer is only as good as its calibration. Even a perfectly designed sensor will drift over time, especially if it's subjected to extreme temperatures, physical shock, or chemical contamination. This is why calibration is a serious industry.

In everyday terms, calibration means checking your thermometer against a known temperature reference — an ice bath (0°C), boiling water (adjusting for altitude and atmospheric pressure), or a certified reference thermometer — and adjusting the reading accordingly.
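A simple two-point correction captures the idea. The sketch below assumes the thermometer's error is linear between the two reference points, and the raw readings are invented for illustration. The boiling reference defaults to 100°C, which only holds at standard sea-level pressure, so pass a different `ref_high` at altitude.

```python
def make_two_point_correction(raw_low, raw_high, ref_low=0.0, ref_high=100.0):
    """Build a function that maps raw readings onto the reference scale."""
    gain = (ref_high - ref_low) / (raw_high - raw_low)

    def correct(raw):
        # Shift so the low point lands on its reference, then rescale.
        return ref_low + (raw - raw_low) * gain

    return correct


# Hypothetical thermometer: reads 0.8 in an ice bath and 99.2 in boiling water.
correct = make_two_point_correction(raw_low=0.8, raw_high=99.2)
print(f"Raw 37.5 -> corrected {correct(37.5):.2f} °C")
```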

At the highest levels of precision, calibration happens against the International Temperature Scale of 1990 (ITS-90), the international agreement that defines temperature in terms of 17 fixed points — the triple point of water, the freezing point of gold, the boiling point of helium — each corresponding to a precisely defined thermodynamic state. Laboratories that perform this work include the Bureau International des Poids et Mesures (BIPM) at Pavillon de Breteuil, F-92312 Sèvres Cedex, France, and PTB (Physikalisch-Technische Bundesanstalt) at Abbestraße 2–12, 10587 Berlin, Germany.

Why Accuracy Varies So Much

You might have noticed from the table that accuracy varies wildly across thermometer types. This isn't an accident — it reflects fundamental tradeoffs in physics and engineering.

A thermocouple that survives inside a jet turbine running at 1,400°C can't also be a sub-degree precision instrument. The voltages it produces are tiny and easily contaminated by electrical noise. The environment it works in would destroy a platinum RTD. So engineers accept lower absolute accuracy in exchange for a sensor that won't melt.

Conversely, a platinum RTD in a pharmaceutical manufacturing line is measuring batch temperatures where a 0.5°C deviation could compromise a drug's efficacy. Accuracy is everything; the sensor never gets near a furnace.

Infrared thermometers occupy a special case. Their accuracy depends heavily on a property of the target surface called emissivity — a measure of how efficiently it radiates infrared energy relative to a perfect "black body" emitter. Shiny metallic surfaces have low emissivity and can give wildly wrong readings unless you correct for it. Human skin, fortunately, has an emissivity close to 1, which is why forehead thermometers work reliably on people.
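A rough sketch of that correction follows. It assumes a simplified broadband model in which the detector sees the surface's own emission plus ambient radiation reflected off it, all following the fourth-power Stefan-Boltzmann law. Real instruments work over a limited wavelength band, so this illustrates the idea rather than any manufacturer's algorithm.

```python
def corrected_temp_k(apparent_temp_k, emissivity, ambient_temp_k):
    """Estimate true surface temperature from an emissivity-1 reading.

    Model: T_apparent^4 = emissivity * T_true^4 + (1 - emissivity) * T_ambient^4
    (all temperatures in kelvin).
    """
    reflected = (1.0 - emissivity) * ambient_temp_k ** 4
    return ((apparent_temp_k ** 4 - reflected) / emissivity) ** 0.25


# Hypothetical shiny metal surface (emissivity 0.1) in a 22 °C room,
# read by a thermometer that assumes a perfect emitter.
apparent_k = 47.0 + 273.15
true_k = corrected_temp_k(apparent_k, emissivity=0.1, ambient_temp_k=295.15)
print(f"Apparent {apparent_k - 273.15:.0f} °C, corrected {true_k - 273.15:.0f} °C")
```

For a surface with emissivity near 1, such as skin, the correction is small, which is consistent with forehead readings being dependable.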

The Future of Thermometry

Thermometry isn't standing still. Quantum thermometers — using the quantum properties of nitrogen-vacancy centers in diamond to detect nanoscale temperature variations — are now in university laboratories, with potential applications in mapping temperatures inside living cells without any probe insertion at all.

Photonic thermometers, being refined at institutions like NIST, measure temperature through the wavelength of light in a precision optical cavity, with accuracy better than 0.001°C, potentially replacing platinum RTDs for top-tier calibration work.

In consumer devices, the trend is relentless integration: temperature sensors built directly into wearable rings, patches, and smartwatches, continuously streaming data to health apps. The Apple Watch already uses a wrist temperature sensor to track circadian rhythms and ovulation cycles. Skin-worn continuous temperature patches are entering clinical trials for fever monitoring in hospital patients without the disruption of repeated manual checks.

Four Centuries of Trying to Read the World

Galileo's thermoscope, usually dated to the 1590s, was nothing more than a glass bulb on a long, thin tube, turned upside down with its open end standing in a vessel of water. As the air in the bulb warmed or cooled, the water column in the tube fell or rose. It had no scale. It couldn't even tell you the actual temperature, only whether it was hotter or colder than before.

Today, a sensor the size of a grain of rice can measure temperature to within a tenth of a degree, transmit the result wirelessly, log it to a server, and trigger an alert if it drifts outside a defined range. The physics has barely changed. Heat still makes things expand, metals still change their resistance, warm objects still radiate infrared. What changed is our ability to measure those effects with ever-greater precision and to build that measurement invisibly into the world around us.

The next time you point a forehead thermometer at your child or glance at the temperature readout on your oven, you're using a direct descendant of Fahrenheit's mercury tube, Seebeck's junction, and the platinum resistance measurements that defined the international temperature scale. That's not a bad lineage for an instrument most of us take entirely for granted.